What Is the Elephant Herding Optimization (EHO) Algorithm?

Elephant Herding Optimization (EHO) is a population-based metaheuristic inspired by the social behavior of elephant herds. In nature, elephants live in clans led by a matriarch (the oldest female), and male elephants eventually leave the family group to roam independently. EHO abstracts these two core behaviors—clan updating (social learning around a matriarch) and separating (reinitializing some individuals to explore new regions)—to search efficiently for optimal (or near-optimal) solutions in complex optimization landscapes.

Like other swarm-intelligence methods (e.g., PSO, ACO), EHO iteratively improves a set of candidate solutions (the “elephants”) by mixing exploitation (moving toward the best solutions found so far) with exploration (diversifying the search to avoid local minima). Its hallmark features are (1) regrouping solutions into clans with a matriarch guiding movement and (2) a periodic reset (separating operator) for some of the worst solutions to push the search into fresh territory.

Introduction to EHO

Optimization problems appear in engineering design, machine learning hyperparameter tuning, scheduling, network routing, finance, energy systems, and more. Many of these problems are non-convex and multi-modal, making classical gradient-based optimizers unreliable or inapplicable—especially when derivatives are unavailable or noisy.

EHO is an attractive option because:

- It lends itself to parallelism: fitness evaluations and clan operations can be processed in parallel.

- It’s simple to implement and tune (few parameters).

- It balances intensification (learn from the matriarch/elite solutions) with diversification (separate and reinitialize weak solutions).

- It’s flexible: works for continuous, discrete, and constrained problems with modest adaptations.

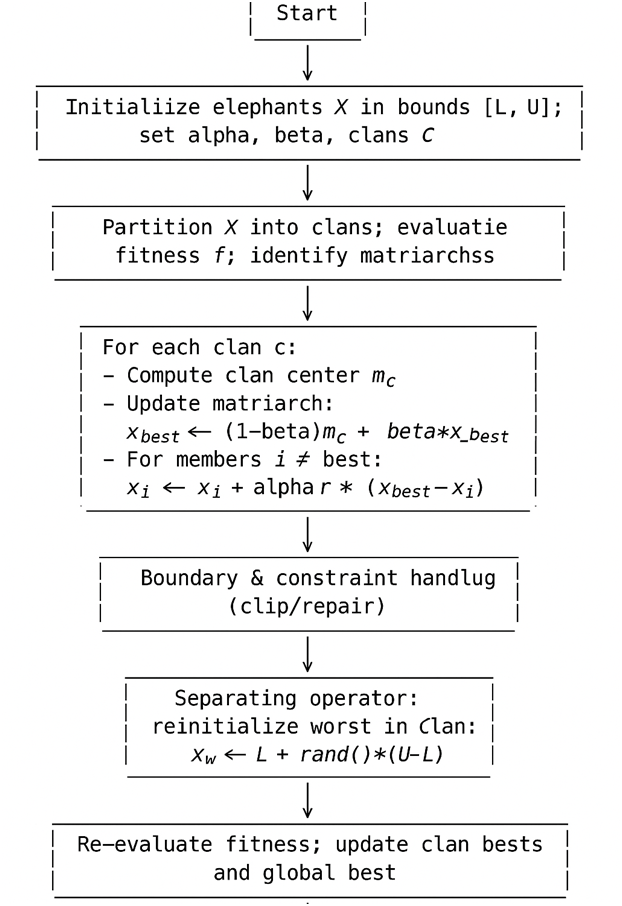

At a high level, EHO works as follows: initialize a swarm of elephants (candidate vectors), split them into clans, evaluate fitness, update each clan by moving members toward their matriarch (and/or clan center), occasionally reinitialize some poorly performing individuals (separating), enforce bounds and constraints, and repeat until a stopping condition is met.

Pseudocode

    Input: objective f(x), bounds L, U, population size N, clans C,
           alpha (learning rate), beta (matriarch blend),
           max_iter, sep_rule (e.g., worst-per-clan)

    Initialize population X randomly in [L, U]; split into C clans
    Evaluate fitness f(X); identify clan bests and global best
    for t = 1 to max_iter do
        for each clan c do
            Compute clan center m_c
            // Update matriarch
            x_best(c) <- (1 - beta) * m_c + beta * x_best(c)
            // Update members
            for each i in clan c, i != best do
                r ~ U(0,1)^D
                x_i <- x_i + alpha * r .* (x_best(c) - x_i)
            end for
        end for
        // Boundary/constraint handling
        X <- clip_or_repair(X, L, U, constraints)
        // Separating operator
        for each clan c do
            w <- index of worst in clan c
            r ~ U(0,1)^D
            x_w <- L + r .* (U - L)
        end for
        Evaluate f(X); update clan bests and global best
        if stopping_condition_met then break
    end for
    return x_gbest, f(x_gbest)

The EHO Algorithm

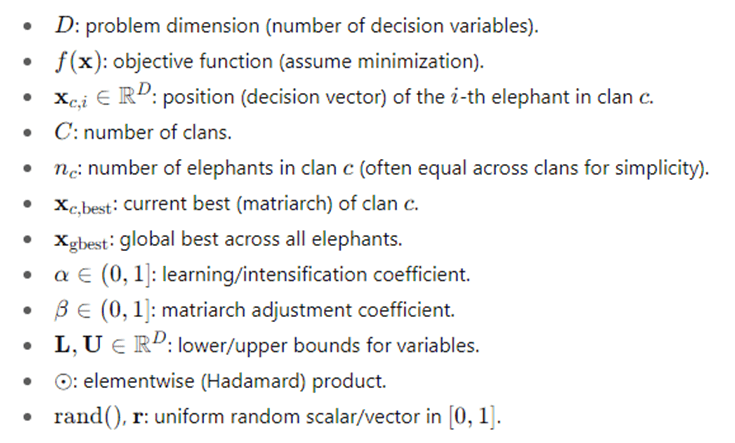

Notation

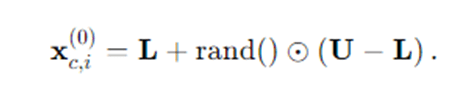

Initialization

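Randomly sample the initial population within bounds. For each elephant i and dimension d, with lower bound $L_d$ and upper bound $U_d$:

$$x_{i,d} = L_d + r_{i,d}\,(U_d - L_d), \qquad r_{i,d} \sim U(0,1)$$

Partition the population into C clans (e.g., round-robin or random split). Compute the fitness $f(x_{c,i})$ of every elephant i in every clan c, and identify both the clan bests and the global best.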
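A minimal Python sketch of this initialization step, assuming box bounds given as per-dimension lists and a round-robin split. The names `init_population` and `split_into_clans` are illustrative helpers, not part of any EHO library, and the fixed seed is only there to make the run repeatable.

```python
import random

def init_population(n, lower, upper, rng):
    """Sample n points uniformly inside the box [lower, upper]."""
    return [
        [lo + rng.random() * (hi - lo) for lo, hi in zip(lower, upper)]
        for _ in range(n)
    ]

def split_into_clans(population, n_clans):
    """Round-robin split: elephant k is assigned to clan k % n_clans."""
    return [population[c::n_clans] for c in range(n_clans)]

rng = random.Random(0)  # fixed seed (assumption) for reproducibility
lower, upper = [-5.0, -5.0], [5.0, 5.0]
population = init_population(30, lower, upper, rng)
clans = split_into_clans(population, 3)
print([len(clan) for clan in clans])  # [10, 10, 10]
```

A random split would work equally well; round-robin is used here only because it keeps clan sizes balanced without extra bookkeeping.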

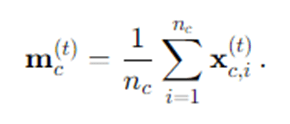

Clan Center

For each clan c with $n_c$ members, compute its center (mean position):

$$m_c = \frac{1}{n_c} \sum_{i=1}^{n_c} x_{c,i}$$

Matriarch (Clan Leader) Update

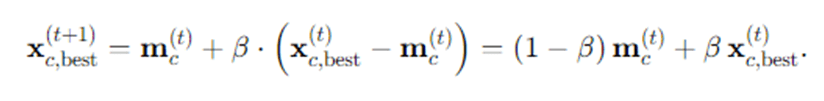

The matriarch is the current best elephant in the clan. EHO often updates the matriarch toward the clan center $m_c$ for stabilization:

$$x_{\text{best},c} \leftarrow (1 - \beta)\, m_c + \beta\, x_{\text{best},c}$$

If β=0, the matriarch snaps to the clan center. If β=1, the matriarch stays where she is. Typical β is somewhere in between, mildly smoothing extremes.
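The two limiting cases of β are easy to check in code. The sketch below follows the blend used in the pseudocode; `clan_center` and `update_matriarch` are hypothetical helper names, and the three-elephant clan is made up for illustration.

```python
def clan_center(clan):
    """Mean position of a clan, computed per dimension."""
    n = len(clan)
    return [sum(x[d] for x in clan) / n for d in range(len(clan[0]))]

def update_matriarch(matriarch, center, beta):
    """Blend: (1 - beta) * center + beta * matriarch."""
    return [(1.0 - beta) * m + beta * x for x, m in zip(matriarch, center)]

clan = [[0.0, 0.0], [2.0, 4.0], [4.0, 2.0]]
center = clan_center(clan)
matriarch = clan[0]  # assume the first elephant is the clan best

print(center)                                    # [2.0, 2.0]
print(update_matriarch(matriarch, center, 0.0))  # [2.0, 2.0], snaps to the center
print(update_matriarch(matriarch, center, 1.0))  # [0.0, 0.0], stays in place
print(update_matriarch(matriarch, center, 0.5))  # [1.0, 1.0], halfway
```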

Clan Member Update

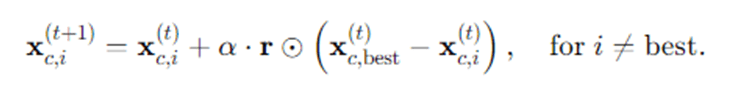

Non-matriarch elephants move toward the matriarch, with a randomized step:

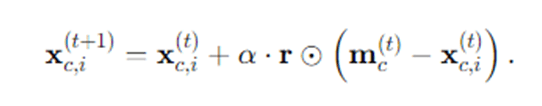

This is a “pull-toward-leader” step with step-size scaled by α and noise vector r. Some variants also blend the clan center:

Both are reasonable; the leader-pull version increases intensification; the center-pull version moderates outliers.
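As a rough illustration of the leader-pull step, the sketch below moves one elephant toward its matriarch using the rule from the pseudocode, x_i + α · r · (x_best − x_i), with an independent r drawn per dimension. The function name `update_member` and the sample coordinates are assumptions made for this example.

```python
import random

def update_member(x, matriarch, alpha, rng):
    """Randomized step toward the matriarch, one random factor per dimension."""
    return [xi + alpha * rng.random() * (bi - xi) for xi, bi in zip(x, matriarch)]

rng = random.Random(1)  # fixed seed (assumption) for reproducibility
x = [4.0, -3.0]
matriarch = [1.0, 1.0]
new_x = update_member(x, matriarch, alpha=0.5, rng=rng)
print(new_x)
```

With α = 0.5 each coordinate covers at most half of the remaining distance to the matriarch, so the new point stays between the old position and the midpoint. Values of α above 1 would allow overshooting the leader.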

Separating Operator (Exploration)

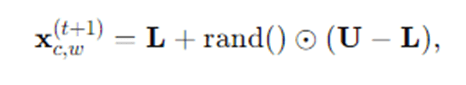

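To mimic young males leaving the herd, reinitialize a fraction of the population (often the worst elephant in each clan) at random positions:

$$x_{c,w} = L + r \odot (U - L), \qquad r \sim U(0,1)^D$$

Here w is the index of a low-fitness elephant in clan c, and $\odot$ denotes element-wise multiplication. This injects diversity and helps escape local minima.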
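A small Python sketch of the worst-per-clan rule for a minimization problem, where the worst elephant is the one with the highest objective value. `separate_worst` is a hypothetical helper, and the sphere objective and sample clan are only there to make the example concrete.

```python
import random

def sphere(x):
    return sum(v * v for v in x)

def separate_worst(clan, fitness, lower, upper, rng):
    """Replace the worst (highest-f) elephant in place; return its index."""
    worst = max(range(len(clan)), key=lambda i: fitness[i])
    clan[worst] = [lo + rng.random() * (hi - lo) for lo, hi in zip(lower, upper)]
    return worst

rng = random.Random(2)  # fixed seed (assumption) for reproducibility
clan = [[0.5, 0.5], [4.0, 4.0], [1.0, -1.0]]
fitness = [sphere(x) for x in clan]          # [0.5, 32.0, 2.0]
replaced = separate_worst(clan, fitness, [-5.0, -5.0], [5.0, 5.0], rng)
fitness[replaced] = sphere(clan[replaced])   # refresh the stale fitness value
print(replaced)  # 1
```

Note that the replaced elephant needs a fresh fitness evaluation before the next ranking; otherwise the clan would be sorted on a stale value.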

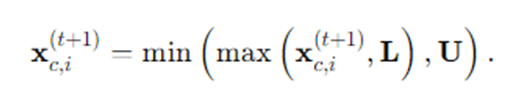

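Boundary Handling

After updates, clip or repair out-of-bound coordinates. Clipping simply clamps each coordinate to its bounds:

$$x_{i,d} \leftarrow \min\bigl(\max(x_{i,d},\, L_d),\, U_d\bigr)$$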

Constraint handling (if any) can include penalty functions, repair heuristics, or feasibility-first ranking.
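The sketch below shows clipping alongside one possible penalty scheme: a quadratic penalty on inequality constraints written as g(x) ≤ 0. The helper names, the penalty weight of 1000, and the example constraint x₁ + x₂ ≥ 1 are all assumptions chosen for illustration; a real problem usually needs its own penalty design.

```python
def clip(x, lower, upper):
    """Clamp every coordinate to its [lower, upper] interval."""
    return [min(max(v, lo), hi) for v, lo, hi in zip(x, lower, upper)]

def penalized(f, constraints, weight=1e3):
    """Wrap f so each violated constraint g(x) <= 0 adds weight * violation**2."""
    def wrapped(x):
        violation = sum(max(0.0, g(x)) ** 2 for g in constraints)
        return f(x) + weight * violation
    return wrapped

def sphere(x):
    return sum(v * v for v in x)

# Example constraint x1 + x2 >= 1, rewritten as g(x) = 1 - x1 - x2 <= 0.
f_pen = penalized(sphere, [lambda x: 1.0 - x[0] - x[1]])

print(clip([6.0, -7.5], [-5.0, -5.0], [5.0, 5.0]))  # [5.0, -5.0]
print(f_pen([1.0, 1.0]))  # 2.0, feasible so no penalty
print(f_pen([0.0, 0.0]))  # 1000.0, infeasible so the penalty dominates
```

Because the penalty is folded into the objective, the rest of the EHO loop does not need to know that constraints exist.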

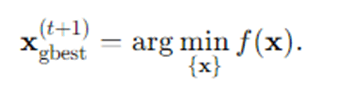

Fitness & Elitism

Re-evaluate fitness, then update each clan best and the global best, keeping the previous global best whenever no newly evaluated elephant improves on it.

Stopping Criteria

Stop when any of the following is met:

- Max iterations/generations reached.

- Fitness threshold achieved (f(x_gbest) ≤ ϵ).

- No improvement for K successive iterations.
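These three tests can be combined into one check. The sketch below assumes a minimization problem and a `history` list holding the best-so-far objective after each iteration (so it never increases); `should_stop` and its parameter names are illustrative, with `patience` playing the role of K.

```python
def should_stop(history, max_iter, eps, patience):
    """Return True when any of the three stopping criteria is met."""
    if len(history) >= max_iter:          # iteration budget exhausted
        return True
    if history and history[-1] <= eps:    # fitness threshold reached
        return True
    # No improvement over the last `patience` iterations.
    if len(history) > patience and history[-1] >= history[-1 - patience]:
        return True
    return False

print(should_stop([3.0, 2.0, 1.0], max_iter=100, eps=1e-6, patience=5))  # False
print(should_stop([3.0, 1e-9], max_iter=100, eps=1e-6, patience=5))      # True
print(should_stop([2.0] * 7, max_iter=100, eps=1e-6, patience=5))        # True
```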

Step-by-Step EHO (Explanation)

Initialize the Search Space: Begin by defining the decision variable bounds for your problem. For each dimension, set lower (L) and upper (U) limits. Randomly sample a set of candidate solutions (elephants) uniformly within those bounds. This gives unbiased, wide initial coverage of the search space.

Form Clans and Identify Matriarchs: Divide the full population into C clans, each a small group of elephants. Within each clan, compute fitness for all elephants and identify the matriarch (the best solution in that clan). This structure creates multiple centers of learning across the population, reducing the risk that the entire search prematurely converges to one region.

Compute Clan Centers: For each clan, compute its average position. The clan center acts like a cohesion force that pulls extreme members back toward the “consensus” of the clan. Updating the matriarch with a blend of her current position and the clan center damps erratic movements while preserving elite information.

Update the Matriarchs: Adjust each matriarch (the clan leader) by mixing her position with the clan center using the β coefficient. This keeps leaders strong but not overly dominant, and it helps stabilize the search when the clan is scattered. Intuitively, the matriarch represents the best known pattern within that clan, and the center anchors that pattern within the clan’s collective experience.

Update Clan Members: Move each non-leader elephant toward the matriarch (or center) with a randomized step. The step magnitude (controlled by α) and randomness (r) ensure that members perform localized exploration around promising solutions. Over time, members converge toward their leader, but because there are multiple clans, convergence happens in several places across the search space simultaneously.

Apply the Separating Operator: To maintain exploration, periodically reinitialize some of the weakest elephants (often the worst in each clan). This “kicks” the search out of local troughs by spawning new candidates in unexplored areas. It’s analogous to simulated annealing’s random jumps or GA’s mutation, except that EHO applies it selectively to underperformers and frames it as young elephants leaving the clan.

Handle Bounds and Constraints: After updates, ensure all solutions are feasible. Clamp out-of-range variables to their bounds. If you have constraints (equality/inequality), you can apply a penalty to the fitness when violations occur, or repair the solution by projecting it back to the feasible set. Feasible, well-repaired solutions keep the search valid and meaningful.

Evaluate and Record Elites: Recompute fitness values. Update each clan’s matriarch and the global best solution. Track the best fitness over time; this not only helps with stopping criteria but also provides a convergence curve for analysis and reporting.

Check Stopping Criteria: If you’ve reached the maximum number of iterations, achieved a satisfactory objective value, or seen improvement stall, stop and report the global best solution. Otherwise, continue with another cycle of clan updating and separating.

Worked Example

A Simple Example: Minimizing the Sphere Function

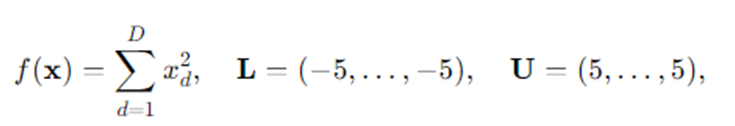

Let’s minimize the two-dimensional sphere function f(x) = x₁² + x₂², whose minimum value of 0 is attained at (0, 0).

Initialization: Suppose we create N=30 elephants and split them into C=3 clans of 10 each. Sample each x=(x₁, x₂) uniformly in [−5,5]².

Evaluate: Compute f(x) = x₁² + x₂². The lower the value, the better.

Leaders: In each clan, identify the current lowest-value solution (matriarch).

Update:

- Matriarch moves slightly toward the clan center using β (e.g., 0.5).

- Other members move toward the matriarch with step size α (e.g., 0.5) and random vector r.

Separate: Reinitialize the worst one or two elephants (highest f) in each clan back in [−5,5]².

Repeat: Iterate 100–200 generations. You’ll typically see the global best marching toward (0,0), the known minimizer.

This toy example illustrates how EHO compresses the population around good areas while constantly testing new regions via separating.
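The walkthrough can be turned into a compact, standard-library-only Python sketch. This is one possible reading of the steps above, not a reference implementation: the function name `eho_minimize`, the fixed seed, re-evaluating fitness before separating, and reinitializing exactly one elephant per clan per iteration are all assumptions made for the example.

```python
import random

def sphere(x):
    return sum(v * v for v in x)

def eho_minimize(f, lower, upper, n_clans=3, clan_size=10,
                 alpha=0.5, beta=0.5, max_iter=200, seed=0):
    rng = random.Random(seed)
    dim = len(lower)

    def random_point():
        return [lo + rng.random() * (hi - lo) for lo, hi in zip(lower, upper)]

    def clip(x):
        return [min(max(v, lo), hi) for v, lo, hi in zip(x, lower, upper)]

    clans = [[random_point() for _ in range(clan_size)] for _ in range(n_clans)]
    best_x = list(min((x for clan in clans for x in clan), key=f))
    best_f = f(best_x)
    history = [best_f]

    for _ in range(max_iter):
        for clan in clans:
            fits = [f(x) for x in clan]
            lead = min(range(clan_size), key=fits.__getitem__)
            center = [sum(x[d] for x in clan) / clan_size for d in range(dim)]
            # Matriarch: blend of the clan center and her current position.
            matriarch = [(1 - beta) * m + beta * v
                         for v, m in zip(clan[lead], center)]
            for i in range(clan_size):
                if i == lead:
                    clan[i] = clip(matriarch)
                else:
                    # Member: randomized step toward the matriarch.
                    clan[i] = clip([v + alpha * rng.random() * (b - v)
                                    for v, b in zip(clan[i], matriarch)])
            # Separating: reinitialize the worst elephant after the move.
            fits = [f(x) for x in clan]
            worst = max(range(clan_size), key=fits.__getitem__)
            clan[worst] = random_point()
        # Elitism: keep the best point seen so far.
        cand = min((x for clan in clans for x in clan), key=f)
        if f(cand) < best_f:
            best_x, best_f = list(cand), f(cand)
        history.append(best_f)
    return best_x, best_f, history

best_x, best_f, history = eho_minimize(sphere, [-5.0, -5.0], [5.0, 5.0])
print(best_x, best_f)
```

The returned `history` is the best-so-far curve, which is convenient for plotting convergence. The sketch evaluates `f` more often than strictly necessary to keep the code short; caching fitness values would matter for expensive objectives.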

Advantages and Disadvantages of EHO

Advantages

- Simplicity: EHO has a small set of intuitive parameters (α, β, number of clans, clan size) and straightforward update rules.

- Balanced Exploration–Exploitation: Clan movement concentrates search near promising regions (exploitation), while the separating operator injects fresh individuals (exploration).

- Multi-cluster Search: Clans act like parallel sub-populations, naturally covering multiple basins of attraction and lowering the risk of premature convergence.

- Parallelizable: Fitness evaluations and clan updates can be done in parallel, which is valuable for expensive objective functions.

- Versatile: With minor adjustments, EHO can handle discrete variables, mixed domains, constraints, and multi-objective variants.

Disadvantages

- Scaling to Very High Dimensions: As dimensionality grows, exploration becomes more difficult; EHO typically still works, but may require larger populations, more iterations, or hybridization with local search.

- Parameter Sensitivity: The balance between α and β, as well as how aggressively you apply separating, can influence convergence speed and final solution quality.

- No Guarantee of Global Optimality: Like most metaheuristics, EHO is heuristic: it offers good solutions in practice but no formal global-optimality guarantees on complex landscapes.

- Potential for Stagnation: If separating is too weak or α is too small, EHO can stagnate around suboptimal regions. Conversely, too aggressive exploration can slow convergence.

- Constraint Handling Complexity: Real-world constraints often demand penalty design or repair heuristics, which require domain insight and tuning.

Applications of EHO

EHO’s structure lends itself to a wide variety of real-world tasks:

- Engineering Design Optimization: Truss sizing, aerodynamic shape tuning, structural optimization under stress/weight constraints, and controller parameter tuning.

- Machine Learning & Data Mining: Feature selection, SVM or neural network hyperparameter tuning, clustering (e.g., optimizing cluster centers), ensemble weighting.

- Wireless Sensor Networks & IoT: Energy-aware routing, cluster-head selection, duty cycling, and coverage optimization—EHO’s clan metaphor maps nicely to clustered network topologies.

- Scheduling & Logistics: Job-shop scheduling, vehicle routing with time windows, inventory control, and resource allocation.

- Energy Systems: Economic dispatch, unit commitment, PV/WT sizing, microgrid control parameter tuning, and demand response optimization.

- Image Processing & Computer Vision: Multilevel thresholding, edge detection parameter tuning, image segmentation.

- Finance: Portfolio selection, risk-return balancing, parameter fitting in quantitative models.

- Control Systems: PID tuning, robust controller design, model predictive control parameter optimization.

Conclusion

Elephant Herding Optimization leverages two elegant ideas from elephant society: cohesive learning within clans under a matriarch and separation to explore new grounds. This dance between intensification (move toward leaders and centers) and diversification (reinitialize weak performers) gives EHO a practical, flexible, and often competitive edge on real-world optimization problems where gradients are unavailable or unreliable.

For practitioners, EHO hits a sweet spot: it’s easy to implement, easy to reason about, and adaptable to many domains. Success hinges on mindful parameter choices—particularly α, β, clan sizing, and how aggressively you apply the separating operator—as well as thoughtful constraint handling. In many applications, EHO also plays well in hybrid setups (e.g., EHO for global exploration followed by a local solver for fine-tuning), often boosting both convergence speed and final accuracy.

Five Frequently Asked Questions (FAQs)

Q1. How do I choose the number of clans and clan size?

There’s no one-size-fits-all rule, but a common starting point is 3–5 clans with equal sizes. If your problem is highly multi-modal, more clans (with smaller sizes) can increase coverage; if evaluations are expensive, fewer clans with modest size may be more efficient. Start simple (e.g., 3 clans, 10 elephants each) and tune empirically.

Q2. What values should I use for α and β?

Typical ranges are α∈[0.3,0.8] and β∈[0.3,0.8]. Larger α intensifies movement toward the leader (faster convergence, higher risk of getting stuck); larger β keeps matriarchs closer to their previous position (more stability). A practical approach is to start with α≈0.5,β≈0.5 and adjust based on observed convergence speed and diversity.

Q3. How often should I apply the separating operator?

A common tactic is to reinitialize the single worst elephant in each clan at every iteration, or to reinitialize a fraction (e.g., 5–10%) of the whole population every few iterations. If you see premature convergence, increase the separating intensity or frequency. If the search looks too noisy, reduce it.

Q4. Can EHO handle constraints and discrete variables?

Yes. For constraints, use penalty functions (penalize infeasible solutions), feasibility-first sorting, or custom repair operators (project back to feasibility). For discrete problems, round/encode variables or design discrete move operators (e.g., swap, insert). The clan and separating concepts still apply.

Q5. How does EHO compare to PSO or GA?

EHO is conceptually closer to PSO (leader-guided moves) than GA (crossover/mutation). Unlike PSO, EHO explicitly partitions the population into clans and actively reinitializes weak members via separating. Many users find EHO easier to tune than GA, with behavior somewhere between PSO’s social learning and GA’s exploration. In practice, performance is problem-dependent, so it is worth benchmarking EHO against both on your own problem.