Introduction

Cloud computing has evolved from a buzzword into a critical pillar of modern digital infrastructure. As organizations increasingly rely on virtualized environments for storage, computing, and deployment, the need for robust, innovative, and secure cloud systems is more urgent than ever. While cloud services offer flexibility, scalability, and cost-efficiency, they also bring a slew of technical and research-related challenges. These challenges represent opportunities for PhD and postgraduate scholars to make impactful contributions to the field. In this post, we explore the top 10 research challenges in cloud computing, provide topic suggestions, and offer guidance for selecting future-ready research problems.

Cloud Security and Privacy

As enterprises increasingly migrate sensitive workloads and large-scale datasets to public and hybrid cloud platforms, the attack surface of cloud infrastructure expands accordingly. Despite being one of the most extensively studied areas, cloud security and privacy remain open problems because of the complex interplay of distributed systems, virtualized resources, and shared tenancy models. These factors expose cloud environments to a growing number of threats, including unauthorized access, data exfiltration, man-in-the-middle attacks, advanced persistent threats (APTs), and malicious insider activity.

Open Research Challenges:

- Secure Multi-Tenancy Models: Designing isolation mechanisms that prevent side-channel attacks, hypervisor escapes, and data leakage among co-located tenants in virtualized infrastructures without compromising scalability or resource optimization.

- Zero-Knowledge Authentication in Cloud Storage: Developing zero-knowledge proof (ZKP)-based protocols for cloud authentication that allow users to prove their identity without disclosing credentials or metadata, preserving data confidentiality during access requests.

- Lightweight Cryptographic Algorithms for Cloud and Edge Systems: Constructing energy-efficient, low-latency cryptographic schemes suitable for integration into cloud-edge architectures and IoT-enabled environments, where computational overhead must be minimized.

- Insider Threat Detection Using Advanced Machine Learning: Employing behavior analytics, federated learning, and graph-based anomaly detection models to identify insider threats in real time while maintaining user privacy and system performance.
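Zero-knowledge authentication is easiest to see in a classic identification protocol. The sketch below uses the Schnorr protocol (our choice of example; the text names no specific scheme): the prover shows knowledge of a secret `x` behind a public key `y = g^x mod p` without ever transmitting `x`. The group parameters are deliberately tiny toy values and the function names are illustrative, so this is a teaching sketch, not a usable authentication system. Note also that the interactive Schnorr protocol is zero-knowledge only against an honest verifier.

```python
import secrets

# Toy group (far too small for real use): p = 2q + 1 with p and q prime,
# and g = 4 generating the subgroup of prime order q.
P, Q, G = 1019, 509, 4

def keygen():
    x = secrets.randbelow(Q - 1) + 1      # secret credential, never transmitted
    return x, pow(G, x, P)                # public key y = g^x mod p

def commit():
    r = secrets.randbelow(Q)              # fresh random nonce for each login
    return r, pow(G, r, P)                # prover keeps r, sends t = g^r mod p

def respond(x, r, c):
    return (r + c * x) % Q                # s = r + c*x mod q; the nonce r masks x

def verify(y, t, c, s):
    # Accept iff g^s == t * y^c (mod p); a prover without x passes only by
    # guessing the challenge c in advance (probability 1/q).
    return pow(G, s, P) == (t * pow(y, c, P)) % P

x, y = keygen()                           # y is registered with the cloud service
r, t = commit()
c = secrets.randbelow(Q)                  # verifier's random challenge
s = respond(x, r, c)
print(verify(y, t, c, s))                 # True
```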

Future Research Directions:

- Zero Trust Architecture (ZTA): Implementation of identity-aware, micro-segmented, and continuously verified access control frameworks across all layers of cloud infrastructure. Research can target dynamic policy enforcement, contextual trust scoring, and automated risk assessment.

- Homomorphic Encryption and Secure Computation: Exploration of practical homomorphic encryption techniques and secure multiparty computation (SMPC) to enable data processing and analytics on encrypted datasets without prior decryption, addressing data-in-use vulnerabilities.

- Secure Interoperability in Multi-Cloud and Hybrid Cloud Environments: Development of blockchain-based data sharing frameworks, attribute-based access control (ABAC), and secure API gateways to facilitate encrypted, auditable, and policy-compliant data exchange across federated cloud ecosystems.
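Fully homomorphic schemes are heavy, but the core idea of computing on ciphertexts can be shown with the Paillier cryptosystem, which is only additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. The sketch below assumes toy primes (61 and 53) and the common simplification `g = n + 1`; it illustrates the arithmetic and is not secure.

```python
import math
import secrets

# Toy Paillier keypair; real deployments use primes of 1024+ bits each.
p, q = 61, 53
n, n2 = p * q, (p * q) ** 2
lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)   # lcm(p-1, q-1)
mu = pow(lam, -1, n)                                # valid because g = n + 1

def encrypt(m):
    while True:                                     # random r coprime to n
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2

def decrypt(c):
    u = pow(c, lam, n2)
    return ((u - 1) // n) * mu % n                  # L(u) = (u - 1) / n

def add_encrypted(c1, c2):
    # Multiplying ciphertexts adds the underlying plaintexts (mod n)
    return (c1 * c2) % n2

c_total = add_encrypted(encrypt(120), encrypt(45))  # the server never sees 120 or 45
print(decrypt(c_total))                             # 165
```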

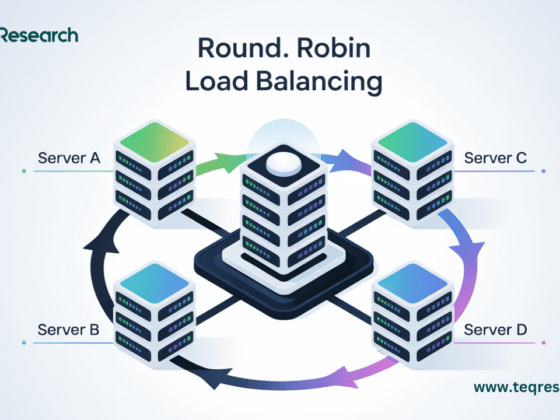

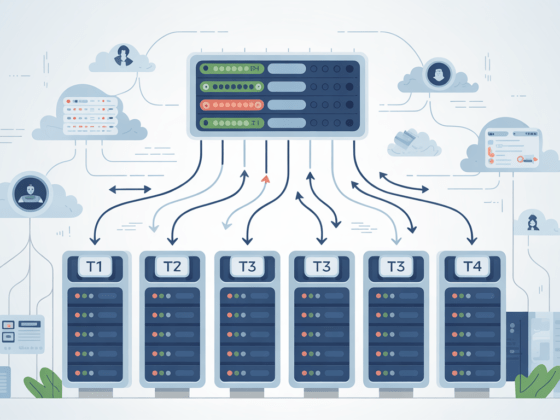

Resource Management and Scheduling in Cloud Environments

Efficient resource management is the cornerstone of high-performing cloud environments. Given the elastic and on-demand nature of cloud services, improper provisioning leads either to under-provisioning (causing SLA violations, degraded performance, and lost revenue) or to over-provisioning (leaving resources underutilized and increasing operational costs and energy waste). Addressing heterogeneous resource types, multi-tenant demands, and dynamic workload patterns requires intelligent, adaptive scheduling strategies that meet Service Level Agreements (SLAs) while optimizing cost and performance.

Key Technical Challenges:

- SLA-Aware Resource Allocation: Guaranteeing SLA compliance under dynamic workloads, network delays, and infrastructure failures remains a major research hurdle, especially in real-time service-oriented architectures.

- Real-Time and Latency-Constrained Job Scheduling: Developing low-latency, deadline-aware job scheduling algorithms for time-sensitive applications such as video streaming, online gaming, or scientific simulations is critical in high-load environments.

- Energy-Aware Virtual Machine (VM) Placement: Balancing compute performance and energy efficiency by optimizing VM placement across physical hosts to reduce power consumption and thermal hotspots without affecting QoS.

- Resource Contention in Multi-Tenant Clouds: Preventing performance degradation due to noisy neighbors and shared resource contention, especially in public cloud IaaS environments where VM isolation is not fully deterministic.
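Energy-aware VM placement is often framed as bin packing: consolidate VMs onto as few hosts as possible so that idle hosts can be switched off. A minimal sketch, assuming a single CPU dimension measured in integer percent of one host, a hypothetical linear power model (100 W idle, 250 W at full load), and the First-Fit Decreasing (FFD) heuristic as one simple baseline:

```python
from dataclasses import dataclass, field

# Hypothetical linear host power model (watts); real values come from measurements.
P_IDLE, P_MAX = 100.0, 250.0

@dataclass
class Host:
    capacity: int                         # CPU capacity in percent of one host
    vms: list = field(default_factory=list)

    def load(self):
        return sum(self.vms)

def place_ffd(vm_demands, host_capacity=100):
    """First-Fit Decreasing: sort VMs by demand, put each on the first host
    with room, and power on a new host only when none fits."""
    hosts = []
    for demand in sorted(vm_demands, reverse=True):
        if demand > host_capacity:
            raise ValueError(f"VM demand {demand} exceeds host capacity")
        target = next((h for h in hosts if h.load() + demand <= host_capacity), None)
        if target is None:
            target = Host(host_capacity)
            hosts.append(target)
        target.vms.append(demand)
    return hosts

def total_power(hosts):
    # Only active hosts draw power; unused hosts are assumed switched off.
    return sum(P_IDLE + (P_MAX - P_IDLE) * h.load() / h.capacity for h in hosts)

hosts = place_ffd([50, 20, 70, 30, 40, 10])
print(len(hosts), total_power(hosts))     # 3 630.0
```

FFD is a fast heuristic, not an optimal solver; bin packing is NP-hard, which is why metaheuristic and learning-based approaches are studied for larger, multi-dimensional instances.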

Emerging Research Directions and Opportunities:

- AI-Based Workload Prediction and Resource Forecasting: Applying deep learning and time series models (e.g., LSTM, ARIMA, Transformer architectures) to predict workload spikes and proactively scale resources to maintain QoS while minimizing over-provisioning.

- Game-Theory-Based Scheduling Models: Leveraging cooperative and non-cooperative game theory to model resource competition among multiple tenants and optimize allocation strategies that ensure fairness, efficiency, and strategic equilibrium.

- Bio-Inspired Metaheuristic Optimization: Utilizing evolutionary algorithms and swarm intelligence approaches—such as Ant Colony Optimization (ACO), Particle Swarm Optimization (PSO), and Genetic Algorithms (GA)—for solving NP-hard scheduling problems in large-scale cloud infrastructures.

- Reinforcement Learning for Adaptive Scheduling: Designing RL-based agents that learn optimal scheduling policies through reward functions aligned with energy, cost, and performance trade-offs in dynamic cloud ecosystems.

- Fog/Edge-Aware Resource Offloading: Exploring hierarchical resource scheduling techniques that consider cloud–edge–IoT paradigms to offload latency-critical tasks to edge nodes while bulk processing remains cloud-resident.

Cloud Scalability and Elasticity

Scalability in cloud computing denotes a system’s capacity to grow (by adding compute, storage, or networking resources) to handle rising workloads efficiently. Elasticity, on the other hand, refers to the dynamic provisioning and de-provisioning of resources in real time based on workload demand. Achieving both efficiently in heterogeneous and distributed cloud environments remains a complex engineering and research challenge, particularly under fluctuating, bursty, or unpredictable workloads.

Why It’s Technically Challenging:

- Horizontal vs. Vertical Scaling Trade-offs: Horizontal scaling (adding instances) offers resilience but incurs synchronization and consistency overheads, whereas vertical scaling (upgrading resources on a single instance) risks single points of failure and hardware limitations.

- Auto-Scaling Latency and Instability: Existing auto-scaling mechanisms often rely on threshold-based policies, which can suffer from delayed reaction times, instability, or over/under-provisioning during sudden workload shifts or flash crowds.

- Resource Contention and Performance Degradation: During high-concurrency events, auto-scaled instances can experience shared resource contention, especially in IaaS or containerized environments, leading to increased tail latencies and SLA violations.
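The reactive, threshold-based behavior described above can be made concrete with a scaling rule modeled on the Kubernetes Horizontal Pod Autoscaler formula, `desired = ceil(current * currentMetric / targetMetric)`, with a tolerance band around the target. The cooldown counter is a simplified stand-in for Kubernetes' stabilization window, and the load trace, target, and replica limits are hypothetical values:

```python
import math

def desired_replicas(current, metric, target, tolerance=0.1, min_r=1, max_r=20):
    """Reactive rule modeled on the HPA formula: skip scaling when the
    metric/target ratio is within +/- tolerance of 1.0, else rescale."""
    ratio = metric / target
    if abs(ratio - 1.0) <= tolerance:
        return current
    return max(min_r, min(max_r, math.ceil(current * ratio)))

def simulate(total_load_trace, start=2, target=60.0, cooldown=2):
    """Replay total CPU load (in %-of-one-replica units). After each change,
    further changes are suppressed for `cooldown` steps."""
    replicas, wait, history = start, 0, []
    for load in total_load_trace:
        per_replica = load / replicas          # average utilization per replica
        if wait > 0:
            wait -= 1
        else:
            new = desired_replicas(replicas, per_replica, target)
            if new != replicas:
                replicas, wait = new, cooldown
        history.append(replicas)
    return history

print(simulate([100, 120, 400, 150, 150, 150, 150]))   # [2, 2, 7, 7, 7, 3, 3]
```

The rule responds only once a spike is already visible in the metric, and the cooldown keeps seven replicas running for two steps after the load has fallen back; that lag is what predictive approaches try to remove.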

Research Opportunities and Future Directions:

- Auto-Scaling Using Predictive Analytics and Time-Series Forecasting: Integrating statistical and machine learning forecasting models (e.g., ARIMA, Prophet, LSTM, GRU) enables proactive scaling decisions instead of reactive threshold-based ones, reducing scaling lag and improving SLA adherence.

- Serverless and Function-as-a-Service (FaaS) Optimization: Investigate the cold-start latency, resource allocation granularity, and function-chaining efficiency in serverless environments to enhance fine-grained, event-driven scalability.

- Elastic Container Orchestration with Kubernetes and Beyond: Research into adaptive container orchestration platforms (e.g., K8s, OpenShift, Nomad) that optimize pod scaling based on real-time metrics, inter-service dependencies, and multi-cluster deployments in hybrid/multi-cloud architectures.

- Multi-Dimensional Elasticity Models: Develop elasticity models that consider not only compute and memory but also I/O throughput, bandwidth, network topology, and inter-service communication latency for microservice-based architectures.

- Self-Adaptive Scaling with Reinforcement Learning: Applying deep reinforcement learning (e.g., Deep Q-Networks, Proximal Policy Optimization) to learn optimal scaling strategies from environment feedback, workload trends, and cost-performance trade-offs.

- Scalability in Distributed Data-Intensive Workloads: Addressing the challenges of scaling data-intensive systems, such as distributed file systems (e.g., HDFS), stream and batch processing frameworks (e.g., Apache Flink, Apache Spark), and NoSQL databases, under elastic conditions.
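Proactive scaling replaces "react to the current metric" with "provision for the forecast metric". The sketch below uses Holt's linear exponential smoothing as a stand-in, a much simpler forecaster than the LSTM, Prophet, or ARIMA models mentioned above; the smoothing factors, 20% headroom, three-step horizon, and per-replica capacity are hypothetical parameters, not recommended values:

```python
import math

def holt_forecast(series, alpha=0.5, beta=0.3, horizon=1):
    """Holt's linear (double) exponential smoothing: track a level and a
    trend, then extrapolate `horizon` steps ahead."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = series[0], series[1] - series[0]
    for y in series[1:]:
        prev_level = level
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
    return level + horizon * trend

def proactive_replicas(rate_history, per_replica_capacity, headroom=1.2, horizon=3):
    # Size the deployment for the forecast load (plus headroom), not the current load.
    predicted = max(0.0, holt_forecast(rate_history, horizon=horizon))
    return max(1, math.ceil(predicted * headroom / per_replica_capacity))

# Requests/s rising steadily; each replica is assumed to handle 100 requests/s.
print(proactive_replicas([100, 200, 300, 400, 500], per_replica_capacity=100))  # 10
```

The forecast three steps ahead is about 800 requests/s, so the sketch provisions 10 replicas now rather than the 6 that the current rate (with the same headroom) would suggest.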

Interoperability and Portability Across Cloud Platforms

Interoperability refers to the ability of diverse cloud systems to work together seamlessly, while portability is the ease with which applications and data can be migrated across different cloud platforms. Despite the growing adoption of multi-cloud and hybrid-cloud strategies, vendor lock-in remains a critical issue due to proprietary APIs, non-standard service interfaces, and incompatible orchestration models. Ensuring cross-cloud operability is crucial for achieving true flexibility, avoiding service monopolies, and enabling robust disaster recovery and workload distribution.

Core Research Challenges:

- Lack of Standardized APIs and Service Descriptors: Proprietary APIs, authentication protocols, and service-specific implementations hinder cross-cloud automation and orchestration. Unified API layers that abstract provider-specific interfaces are still under active investigation.

- Heterogeneous Runtime Environments and Container Migration: Migrating containerized workloads (e.g., Docker/Kubernetes) across providers with varying support for storage backends, networking policies, and orchestration plugins presents compatibility and performance issues.

- Cross-Cloud Data Transformation and Schema Mapping: Cloud services often have incompatible data models, serialization formats, and storage structures. Transforming and synchronizing data without loss or inconsistency during migration or integration remains non-trivial.

Open Research Problems:

- Standardized Cross-Cloud API Frameworks: Development of cloud-agnostic APIs using specifications like OpenAPI, TOSCA (Topology and Orchestration Specification for Cloud Applications), or Cloud Infrastructure Management Interface (CIMI) to facilitate uniform access across platforms.

- Container and VM Portability Across Diverse Hypervisors: Investigating mechanisms for runtime migration and compatibility between container runtimes (Docker, containerd, CRI-O) and hypervisors (KVM, Xen, Hyper-V) used by AWS, Azure, GCP, and private clouds.

- Semantic Interoperability and Ontology-Driven Cloud Integration: Designing ontology-based frameworks that ensure semantic consistency in service descriptions, data schemas, and metadata across disparate cloud environments.

Future Research Directions:

- Cross-Cloud Middleware and Broker Systems: Developing intelligent middleware or cloud service brokers capable of translating and routing service calls between heterogeneous platforms in real time, while handling failover, SLA enforcement, and load balancing.

- Multi-Cloud Orchestration Frameworks: Enhancing orchestration engines (e.g., Terraform, Pulumi, Apache Brooklyn) to support declarative, provider-agnostic configurations and real-time topology reconfiguration during runtime.

- Policy-Based Cloud Portability Models: Implementing policy-driven orchestration that allows applications to shift between providers based on cost, compliance, performance, or geographic considerations using automated decision logic.

- Interoperability Testing and Benchmarking Standards: Creating standardized testbeds and benchmarks to evaluate the interoperability and portability performance of cloud-native applications across AWS, Azure, GCP, and OpenStack environments.
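Policy-based portability can be pictured as a broker that first applies hard constraints (region, compliance) and then ranks the remaining offers. A minimal sketch, in which the provider names, regions, prices, latencies, certification labels, and weights are all hypothetical, and the min-max normalized weighted score is just one possible ranking rule:

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Offer:
    provider: str            # hypothetical provider identifier
    region: str
    cost_per_hour: float
    p95_latency_ms: float
    certifications: frozenset

def choose_provider(offers, allowed_regions, required_certs, w_cost=0.6, w_latency=0.4):
    """Drop offers that violate hard policy (region, compliance), then pick the
    lowest weighted score of min-max normalized cost and latency."""
    eligible = [o for o in offers
                if o.region in allowed_regions and required_certs <= o.certifications]
    if not eligible:
        return None

    def norm(values, v):
        lo, hi = min(values), max(values)
        return 0.0 if hi == lo else (v - lo) / (hi - lo)

    costs = [o.cost_per_hour for o in eligible]
    lats = [o.p95_latency_ms for o in eligible]
    return min(eligible, key=lambda o: w_cost * norm(costs, o.cost_per_hour)
                                       + w_latency * norm(lats, o.p95_latency_ms))

offers = [
    Offer("provider-a", "eu-west", 0.42, 35.0, frozenset({"iso27001", "gdpr"})),
    Offer("provider-b", "us-east", 0.30, 20.0, frozenset({"iso27001"})),
    Offer("provider-c", "eu-central", 0.38, 50.0, frozenset({"iso27001", "gdpr"})),
]
best = choose_provider(offers, {"eu-west", "eu-central"}, frozenset({"gdpr"}))
print(best.provider)     # provider-c
```

Shifting the weights toward latency (for example `w_cost=0.2, w_latency=0.8`) flips the choice to `provider-a`, which is the kind of automated decision logic a policy-driven portability model would encode.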

Efficient Cloud Storage and Data Management

Modern cloud storage systems must ensure high throughput, low latency, and fault tolerance while managing petabyte-scale, high-velocity data across distributed environments.

Key Technical Challenges:

- Low-Overhead Data Deduplication: Achieving inline or post-process deduplication without compromising I/O performance or increasing metadata complexity.

- Scalable and Distributed File Systems: Designing storage systems (e.g., object stores, distributed POSIX file systems) that support horizontal scaling, global namespaces, and strong consistency guarantees.

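- Consistency Models in Geo-Distributed Storage: Balancing the trade-offs captured by the CAP theorem (Consistency, Availability, Partition Tolerance) in systems spanning multiple data centers and regions, where a network partition forces a choice between strong consistency and availability.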
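Chunk-level deduplication with content hashes shows where both the space savings and the metadata overhead come from. The sketch below assumes fixed-size chunking and SHA-256 digests; production systems often prefer content-defined chunking, which copes better with insertions that shift data. The class and method names are illustrative:

```python
import hashlib

class DedupStore:
    """Store each unique chunk once, keyed by its SHA-256 digest; a file is
    kept as an ordered list of digests (its 'recipe')."""

    def __init__(self, chunk_size=4096):
        self.chunk_size = chunk_size
        self.chunks = {}      # digest -> chunk bytes (the deduplicated pool)
        self.recipes = {}     # file name -> list of digests (per-file metadata)

    def put(self, name, data: bytes):
        recipe = []
        for i in range(0, len(data), self.chunk_size):
            chunk = data[i:i + self.chunk_size]
            digest = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(digest, chunk)     # keep only the first copy
            recipe.append(digest)
        self.recipes[name] = recipe

    def get(self, name) -> bytes:
        return b"".join(self.chunks[d] for d in self.recipes[name])

    def stored_bytes(self):
        return sum(len(c) for c in self.chunks.values())

store = DedupStore(chunk_size=4)
store.put("a.txt", b"AAAABBBBCCCC")
store.put("b.txt", b"AAAABBBBDDDD")
print(store.stored_bytes())    # 16
```

The two files hold 24 logical bytes but only 16 are stored; the digest index and per-file recipes are the metadata that grows with every deduplicated chunk.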

Emerging Research Directions:

- Blockchain-Based Data Integrity Verification: Leveraging tamper-evident ledgers to provide verifiable audit trails and immutable records of cloud data states.

- Decentralized Storage Architectures (e.g., IPFS, Filecoin): Exploring peer-to-peer models for content-addressable storage, redundancy, and resilience without centralized control.

- Edge-Aware Data Tiering and Predictive Caching: Integrating edge and fog nodes for latency-aware storage decisions, dynamic caching, and data locality optimization.
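Blockchain-based integrity schemes typically anchor a compact commitment, such as a Merkle root, on a ledger rather than the data itself. The sketch below builds a SHA-256 Merkle tree over stored records and verifies one record with an inclusion proof; the ledger itself is out of scope, and duplicating the last node on odd-sized levels is one convention among several:

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_levels(blocks):
    """Hash the blocks, then hash adjacent pairs upward until one root remains.
    On odd-sized levels the last node is paired with itself."""
    if not blocks:
        raise ValueError("need at least one block")
    levels = [[h(b) for b in blocks]]
    while len(levels[-1]) > 1:
        level = levels[-1]
        if len(level) % 2:
            level = level + [level[-1]]
        levels.append([h(level[i] + level[i + 1]) for i in range(0, len(level), 2)])
    return levels

def merkle_proof(levels, index):
    """Collect the sibling hashes needed to recompute the root from one block."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        sib_hash = level[sibling] if sibling < len(level) else level[index]
        proof.append((sib_hash, index % 2 == 0))   # True: sibling sits on the right
        index //= 2
    return proof

def verify(block, proof, root):
    node = h(block)
    for sib_hash, sib_on_right in proof:
        node = h(node + sib_hash) if sib_on_right else h(sib_hash + node)
    return node == root

records = [b"rec-0", b"rec-1", b"rec-2"]
levels = merkle_levels(records)
root = levels[-1][0]           # only this 32-byte value would need anchoring
print(verify(b"rec-2", merkle_proof(levels, 2), root))   # True
```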

Fault Tolerance and Disaster Recovery

Resilience in cloud infrastructures requires seamless failure handling, minimal downtime, and robust recovery mechanisms across geo-distributed environments.

Core Research Challenges:

- Automated and Granular Fault Detection: Designing AI/ML models for anomaly detection, root cause analysis, and fine-grained fault localization in real time.

- High-Speed, Consistent Replication Mechanisms: Optimizing synchronous and asynchronous data replication techniques to balance consistency, latency, and bandwidth in distributed storage and compute systems.

- Multi-Cloud DR (Disaster Recovery) Strategy Optimization: Coordinating backup, recovery point objectives (RPO), and failover mechanisms across heterogeneous cloud providers.
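Many automated fault detectors start from a statistical baseline before moving to learned models. A minimal sketch of rolling z-score detection over a telemetry stream, assuming a hypothetical latency series, a five-sample window, and a threshold of three standard deviations:

```python
from collections import deque
import statistics

def detect_anomalies(samples, window=5, threshold=3.0):
    """Return indices of samples whose z-score against the trailing `window`
    samples exceeds `threshold` (a simple rolling-baseline detector)."""
    history, flagged = deque(maxlen=window), []
    for i, x in enumerate(samples):
        if len(history) == window:
            baseline = list(history)
            mean = statistics.fmean(baseline)
            std = statistics.pstdev(baseline)
            # A perfectly flat baseline has std 0: treat any deviation as anomalous.
            anomalous = (x != mean) if std == 0 else abs(x - mean) / std > threshold
            if anomalous:
                flagged.append(i)
        history.append(x)   # every sample, including anomalies, enters the baseline
    return flagged

latency_ms = [20, 21, 19, 20, 22, 21, 95, 20, 21, 19]
print(detect_anomalies(latency_ms))   # [6]
```

A baseline like this is cheap but naive: it ignores seasonality, and a spike inflates the baseline for the next few samples. Such weaknesses motivate the ML-based detection and root cause analysis work listed above.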

Future Research Scope:

- AI-Driven Self-Healing Architectures: Developing autonomous systems that can detect, isolate, and recover from faults with minimal human intervention.

- Predictive Failure Modeling: Leveraging deep learning for forecasting hardware/software failures using telemetry, logs, and workload metrics.

- Policy-Based DR Automation Frameworks: Implementing dynamic, SLA-driven disaster recovery orchestration across public, private, and hybrid cloud environments.

Energy-Efficient Cloud Computing

The rapid growth of cloud services has driven up power consumption in hyperscale data centers, raising concerns about carbon footprint and energy costs.

Key Research Challenges:

- VM-Level Power Optimization: Combining dynamic resource scaling with CPU frequency tuning (e.g., dynamic voltage and frequency scaling, DVFS) to minimize energy use without violating QoS/SLA targets.

- Integration of Renewable Energy Sources: Aligning workload scheduling with the availability of solar, wind, and other renewable energy inputs using predictive analytics.

- Edge and Fog Computing for Energy Offloading: Investigating distributed workload placement strategies to shift processing from centralized clouds to energy-efficient edge nodes.
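Aligning deferrable workloads with renewable availability can be sketched as choosing, for each job, the time window with the highest forecast renewable share that still meets its deadline. The forecast values, job parameters, and function name below are hypothetical, and a real scheduler would also account for capacity limits and forecast uncertainty:

```python
def best_start(forecast, duration, release, deadline):
    """Pick the start hour that maximizes the mean forecast renewable share
    over the job's run. The job may start at `release` or later and must
    finish by hour `deadline` (exclusive). Ties resolve to the earliest hour."""
    if deadline > len(forecast):
        raise ValueError("forecast does not cover the deadline")
    candidates = range(release, deadline - duration + 1)
    if not candidates:
        raise ValueError("job cannot finish before its deadline")
    return max(candidates, key=lambda s: sum(forecast[s:s + duration]) / duration)

# Hypothetical hourly forecast: fraction of grid power from renewables.
renewable_share = [0.20, 0.25, 0.30, 0.50, 0.70, 0.80, 0.75, 0.60, 0.40, 0.30]
print(best_start(renewable_share, duration=3, release=0, deadline=10))   # 4
```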

Potential Research Directions:

- Green Cloud Architecture Design: Creating energy-aware infrastructure frameworks that optimize power consumption across compute, storage, and networking layers.

- Thermal-Aware Resource Scheduling: Incorporating real-time thermal profiling to prevent hotspots and reduce cooling requirements through heat-efficient VM allocation.

- AI-Driven Power Prediction Models: Employing machine learning to forecast energy demands, enabling proactive load balancing and dynamic power provisioning.

Edge and Fog Integration with the Cloud

The convergence of edge, fog, and cloud computing enables real-time analytics and low-latency services, but introduces complex challenges in orchestration, synchronization, and security.

Critical Research Challenges:

- Real-Time Data Synchronization Across Layers: Ensuring consistent data states between edge devices, fog nodes, and centralized cloud in high-throughput, heterogeneous environments.

- Latency-Aware, Context-Driven Resource Allocation: Dynamically provisioning compute and storage resources based on proximity, bandwidth, and workload characteristics.

- Security and Privacy in Distributed Edge Nodes: Protecting lightweight devices from attacks while preserving data integrity and confidentiality in untrusted edge environments.
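Latency-aware placement across device, edge, and cloud is often modeled by comparing estimated completion times. A minimal sketch, assuming completion time is upload time plus round-trip time plus execution time (queueing and energy are ignored) and using hypothetical hardware and network figures:

```python
from dataclasses import dataclass

@dataclass
class Task:
    cycles: float        # CPU cycles the task needs
    input_bits: float    # data that must be uploaded if the task is offloaded

@dataclass
class Node:
    name: str
    cpu_hz: float        # CPU frequency available to this task
    uplink_bps: float    # device-to-node bandwidth; inf means no transfer (local)
    rtt_s: float         # network round-trip time; 0 for local execution

def completion_time(task, node):
    # Upload time + round trip + execution time; queueing and energy are ignored.
    return task.input_bits / node.uplink_bps + node.rtt_s + task.cycles / node.cpu_hz

def place(task, nodes):
    return min(nodes, key=lambda n: completion_time(task, n))

# Hypothetical tier parameters for one IoT device.
nodes = [
    Node("local", 1e9, float("inf"), 0.0),
    Node("edge", 10e9, 50e6, 0.010),
    Node("cloud", 50e9, 20e6, 0.080),
]
for t in (Task(1e8, 8e6), Task(2e9, 8e6), Task(1e11, 8e5)):
    print(place(t, nodes).name)   # local, edge, cloud (one per line)
```

With these numbers, a light task stays on the device because uploading costs more than computing, a moderate task goes to the edge, and a compute-heavy task with little input goes to the cloud.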

Emerging Research Topics:

- Federated Learning in Fog-Cloud Architectures: Training decentralized AI models across edge nodes without sharing raw data, enabling privacy-preserving intelligence at scale.

- Trust and Reputation Models for Edge-Cloud Systems: Developing blockchain-based or cryptographic trust frameworks to ensure node reliability and secure cross-layer communication.

- Lightweight Microservices Orchestration at the Edge: Optimizing service discovery, deployment, and scaling of containerized microservices in resource-constrained edge environments.
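The core loop of federated learning can be illustrated with Federated Averaging (FedAvg): clients train locally on private data, and the server averages only the resulting model weights, weighted by each client's sample count. The sketch below assumes a one-parameter linear model `y = w * x`, plain gradient descent, and hypothetical client datasets:

```python
def local_update(w, data, lr=0.01, epochs=20):
    """Client side: a few epochs of gradient descent on mean squared error
    for y = w * x, using only this client's data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def fedavg(global_w, client_datasets, rounds=10):
    """Server side: broadcast the model, collect locally trained weights,
    and average them weighted by each client's sample count."""
    for _ in range(rounds):
        updates = [(local_update(global_w, d), len(d)) for d in client_datasets]
        total = sum(n for _, n in updates)
        global_w = sum(w * n for w, n in updates) / total
    return global_w

# Three clients whose private data all follow y = 3x; only weights leave a client.
clients = [[(1, 3), (2, 6)], [(3, 9)], [(0.5, 1.5), (4, 12), (2.5, 7.5)]]
print(round(fedavg(0.0, clients), 3))   # 3.0
```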

Cloud-based AI/ML Model Training and Deployment

Cloud infrastructure enables scalable AI/ML pipelines, but presents challenges in computation cost, data security, and distributed system coordination during training and deployment.

Key Research Challenges:

- Cost-Efficient Training of Large-Scale Models: Optimizing GPU/TPU utilization, spot instance scheduling, and mixed-precision training to reduce cloud compute costs.

- Scalable Distributed Machine Learning Frameworks: Enhancing the efficiency of data-parallel and model-parallel strategies across multi-cloud or hybrid clusters (e.g., Horovod, Ray, DeepSpeed).

- Robustness Against Model Threats: Addressing vulnerabilities to adversarial attacks, model inversion, and data poisoning in shared and hosted model environments.

Emerging Research Directions:

- Serverless and Event-Driven AI Training Pipelines: Utilizing FaaS (Function-as-a-Service) for dynamic, scalable, and cost-effective ML model training and inference workloads.

- Secure Multi-Party Computation in Federated Learning: Preserving data privacy while training distributed models across multiple organizations using homomorphic encryption or secret sharing.

- Model Lifecycle Management and Version Control: Developing tools for continuous delivery, rollback, auditing, and compatibility tracking of ML models across cloud environments.
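Secret sharing is one of the SMPC building blocks mentioned above. A minimal sketch of secure aggregation with additive secret sharing, assuming honest-but-curious parties, no client dropouts, a fixed-point encoding of real-valued updates, and hypothetical update values; the aggregating parties learn the sum but not any individual contribution:

```python
import secrets

PRIME = 2**61 - 1      # share arithmetic modulus (a Mersenne prime)
SCALE = 10**6          # fixed-point scale for real-valued model updates

def encode(x):
    return round(x * SCALE) % PRIME          # negatives wrap into the field

def decode(v):
    return (v - PRIME if v > PRIME // 2 else v) / SCALE

def share(value, n_parties):
    """Split a field element into n additive shares summing to value mod PRIME;
    any n-1 of them are uniformly random and reveal nothing about value."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares

def secure_sum(client_updates, n_parties=3):
    # Each client sends its share j to party j, so no party sees a full update.
    party_totals = [0] * n_parties
    for update in client_updates:
        for j, s in enumerate(share(encode(update), n_parties)):
            party_totals[j] = (party_totals[j] + s) % PRIME
    # Combining the parties' partial sums reveals only the aggregate.
    return decode(sum(party_totals) % PRIME)

print(secure_sum([0.25, -0.10, 0.40]))   # 0.55
```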

Legal, Ethical, and Governance Issues in the Cloud

As cloud adoption accelerates across jurisdictions, addressing cross-border data governance, ethical AI usage, and regulatory compliance becomes a critical design priority.

Unresolved Legal and Ethical Questions:

- Data Ownership in Multi-Tenant Architectures: Determining legal control and custodianship over data stored and processed in shared cloud environments.

- Cross-Jurisdictional GDPR and Regulatory Enforcement: Ensuring consistent enforcement of privacy laws (e.g., GDPR, HIPAA, CCPA) across globally distributed cloud data centers.

- Ethical Governance of Cloud-Hosted AI Services: Addressing transparency, accountability, and fairness in decision-making systems deployed on opaque, managed platforms.

Advanced Research Directions:

- Data Sovereignty Enforcement Models: Implementing mechanisms to restrict data movement and processing within specific geopolitical boundaries using geofencing and policy tags.

- Privacy-by-Design Cloud Frameworks: Embedding privacy-enhancing techniques (e.g., differential privacy, anonymization) into cloud-native applications and storage systems by default.

- Policy-Aware and Auditable Data Transfer Mechanisms: Developing intelligent data pipelines that enforce compliance policies dynamically during inter-region transfers and third-party sharing.
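Geofencing with policy tags can be reduced to a gate that checks every transfer against the residency rules attached to a dataset and records each decision for auditors. A minimal sketch in which the tag names, regions, and class are hypothetical; unknown tags are denied so that the gate fails closed:

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy tags mapped to the regions where tagged data may reside;
# None means the tag imposes no residency restriction.
POLICY = {
    "eu-personal-data": {"eu-west", "eu-central"},
    "public": None,
}

@dataclass
class TransferGate:
    policy: dict
    audit_log: list = field(default_factory=list)

    def _permits(self, tag, region):
        if tag not in self.policy:
            return False                      # unknown tag: fail closed
        allowed = self.policy[tag]
        return allowed is None or region in allowed

    def authorize(self, dataset, tags, dest_region):
        """Allow a transfer only if every tag on the dataset permits the
        destination region, and append each decision to an audit log."""
        allowed = all(self._permits(t, dest_region) for t in tags)
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "dataset": dataset,
            "tags": sorted(tags),
            "dest": dest_region,
            "allowed": allowed,
        })
        return allowed

gate = TransferGate(POLICY)
print(gate.authorize("crm-export", {"eu-personal-data"}, "us-east"))   # False
```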

Conclusion

Cloud computing research isn’t just about fixing bugs—it’s about shaping the infrastructure of the digital future. Whether you’re looking at security, energy efficiency, AI integration, or multi-cloud interoperability, the opportunities are abundant. By tackling these open challenges, researchers can contribute not just to academia but to real-world systems used by millions. Choose a research problem that inspires you, aligns with your career goals, and pushes the envelope of what’s possible.
