Edge computing is changing how data is handled and processed by bringing computation closer to the data source. This shift is crucial for applications that demand low latency, such as driverless cars, smart cities, and real-time analytics. However, efficiently managing and optimizing resources becomes a significant challenge as edge computing systems grow more complex. One of the primary issues is resource allocation. Unlike traditional cloud computing environments, which offer large, centrally managed pools of resources, edge environments are made up of diverse devices with varying capabilities and intermittent connectivity.

Static resource allocation methods are therefore inadequate and can lead to inefficiencies and performance bottlenecks. Dynamic resource allocation is a strategy designed to overcome these challenges by quickly adapting resources to current demands and conditions. It continuously adjusts to changing workloads and external variables in order to increase efficiency, reduce latency, and maximize resource utilization. In this blog post, we’ll examine the concept of dynamic resource allocation in edge computing. We’ll cover its basic concepts, review its benefits and methods, and discuss the challenges involved in putting it into practice. After reading this article, you should have a clearer understanding of why dynamic resource allocation is essential to the future of edge computing, and how it could promote productivity and innovation in a variety of industries.

What is Edge Computing?

Edge computing is an approach that decentralizes computing resources, moving data processing and analysis closer to where data is generated and consumed. Unlike traditional cloud computing, which relies on centralized data centers, edge computing processes data on or near the data sources, such as local servers, Internet of Things (IoT) sensors, or mobile devices. For real-time applications, this proximity reduces latency and speeds up response times. By distributing computing tasks across a network of edge devices rather than a single centralized location, edge computing increases system scalability and resilience. Furthermore, by sending less data to the cloud, it conserves bandwidth and makes more efficient use of network resources.

Because it enables quick decision-making, real-time data processing at the edge is essential for applications like autonomous vehicles, smart city infrastructure, and industrial automation. Additionally, edge computing can enhance security and privacy by handling sensitive data locally, which reduces its exposure during transmission. With benefits such as reduced network congestion, faster response times, enhanced security, and greater reliability, edge computing plays a significant role in enabling modern, data-intensive applications.

Dynamic Resource Allocation and Its Importance

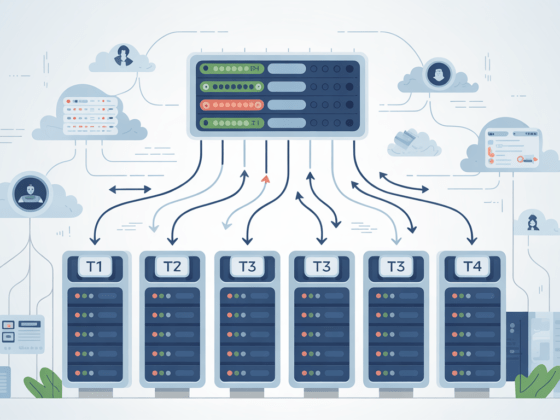

Dynamic resource allocation is the process of changing how computing resources (such as CPU, memory, storage, and network bandwidth) are distributed in real time in response to system demands and conditions. Unlike static allocation, which fixes resource assignments in advance, dynamic allocation continuously adapts to changing demand so that resources are used as effectively as possible.

How It Works:

- Real-Time Monitoring: The system continuously tracks resource usage, performance indicators, and application workloads.

- Adaptive Management: Based on the data collected, resources are reallocated, scaled up or down, or changed to meet present demands.

- Decision-Making Algorithms: Various algorithms assess the data to determine the best allocation strategy. These include, for instance, AI-driven forecasts, optimization models, and heuristic rules.

- Implementation: The system applies the chosen changes, for example by adding processing power during peak times or moving workloads between nodes to balance load.
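
The four steps above can be sketched as a single control pass. This is a minimal illustration rather than a production design: the `NodeStatus` fields, the `decide` and `control_step` names, and the 0.8 and 0.3 thresholds are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class NodeStatus:
    """Snapshot of one edge node (hypothetical fields for illustration)."""
    name: str
    cpu_load: float   # fraction of CPU in use, 0.0 to 1.0
    instances: int    # service instances currently running on the node


def decide(status: NodeStatus, high: float = 0.8, low: float = 0.3) -> int:
    """Decision step: return the change in instance count for one node."""
    if status.cpu_load > high:
        return 1                      # overloaded: add an instance
    if status.cpu_load < low and status.instances > 1:
        return -1                     # idle: remove one, but keep at least one
    return 0                          # within bounds: leave as is


def control_step(nodes: list[NodeStatus]) -> dict[str, int]:
    """One monitor -> decide -> apply pass over all nodes."""
    changes = {}
    for node in nodes:                # monitoring: read each node's snapshot
        delta = decide(node)          # decision-making
        node.instances += delta       # implementation: apply the change
        changes[node.name] = delta
    return changes
```

In a real deployment the snapshot would come from a monitoring agent and the pass would run on a timer; here the caller supplies the snapshots directly.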

Importance of Dynamic Resource Allocation:

- Enhanced Efficiency: By constantly adapting resources to meet evolving needs, dynamic allocation ensures that they are used as efficiently as possible. By preventing both overuse and underuse, this enhances overall system performance.

- Reduced Latency: By shifting resources to where demand is highest as conditions change, dynamic allocation helps keep response times low, which matters most for latency-sensitive applications.

- Cost Savings: By avoiding overprovisioning, dynamic allocation reduces costs. Because resources are allocated based on actual demand, operating costs fall and less capacity is wasted. This is particularly important in edge computing, where resources are usually limited.

- Scalability: As edge networks grow and workloads shift, manual resource management becomes infeasible. Dynamic allocation systems scale with the network and can adjust to shifting loads without human intervention.

- Improved Reliability: By reallocating resources in response to malfunctions or performance issues, dynamic allocation helps to increase fault tolerance and system reliability. It helps to ensure continuous operation by adapting to unforeseen changes or disruptions.

- Flexibility: The flexibility that dynamic resource allocation provides can support a variety of applications and services. It can quickly adapt to shifting workload patterns or new requirements while supporting a wide range of edge computing use cases.

Dynamic resource allocation is crucial in edge computing contexts, where systems must operate dependably and efficiently and resource needs are highly variable. Through real-time resource adaptation, it supports performance, cost-efficiency, and scalability, meeting the demands of modern distributed computing applications.

Methods for Dynamic Resource Allocation

Several techniques are used in dynamic resource allocation to optimize and modify resource distribution in real time. These are the main techniques, examined in detail:

1. Resource Monitoring and Feedback Systems

- Real-Time Monitoring: tracks metrics such as application performance, network traffic, memory usage, and CPU load as they change. Tools such as Prometheus and Grafana, along with cloud-native monitoring systems, provide insights and alerts based on this data.

- Feedback Mechanisms: feed monitoring data back to the allocation system so it can determine how best to adjust resources.
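
One common way to turn raw monitoring samples into a usable feedback signal is to smooth them, so that a single spike does not trigger a reallocation. The sketch below uses an exponentially weighted moving average; the `SmoothedMetric` class and the `alpha` value are assumptions for illustration and are not tied to any particular monitoring tool.

```python
class SmoothedMetric:
    """Exponentially weighted moving average of a monitored value."""

    def __init__(self, alpha: float = 0.5):
        self.alpha = alpha    # weight given to the newest sample (0 < alpha <= 1)
        self.value = None     # no samples seen yet

    def update(self, sample: float) -> float:
        """Fold one new sample into the average and return the smoothed value."""
        if self.value is None:
            self.value = sample
        else:
            self.value = self.alpha * sample + (1 - self.alpha) * self.value
        return self.value
```

A smaller `alpha` reacts more slowly but filters more noise; the allocation logic would read `value` instead of the latest raw sample.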

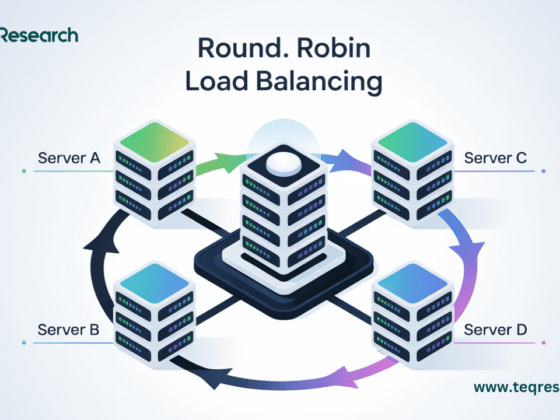

- Round-Robin Load Balancing: distributes incoming requests or tasks among the available resources in a fixed rotation. It is simple to implement, but it ignores how heavily loaded each resource currently is.

- Least Connections: distributes the load more equitably and keeps any one resource from becoming overwhelmed by sending new requests to the resource with the fewest open connections.

- Weighted Load Balancing: assigns different weights to resources based on their capacity or performance. Workloads are distributed according to these weights, so resources with higher capacity handle a larger share of the load.
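
The three strategies differ only in how they pick the next target. A minimal sketch, assuming servers are identified by name and that connection counts and weights are kept in plain dictionaries (the function names are hypothetical):

```python
import itertools
import random


def round_robin(servers):
    """Cycle through servers in order, ignoring their current load."""
    return itertools.cycle(servers)


def least_connections(open_connections):
    """Pick the server with the fewest open connections."""
    return min(open_connections, key=open_connections.get)


def weighted_choice(weights, rng=random):
    """Pick a server with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]
```

For example, `least_connections({"a": 5, "b": 2, "c": 7})` selects `"b"`, while `weighted_choice({"big": 3, "small": 1})` sends roughly three quarters of requests to `"big"` over time.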

3. Autoscaling

- Vertical Autoscaling: modifies the resources (such as CPU and RAM) allocated to a single instance in accordance with demand. It is useful in circumstances where scaling out is impractical.

- Horizontal Autoscaling: adds or removes instances of a service based on load. By scaling out (adding instances) or scaling in (removing instances), this strategy helps manage varying workloads effectively.
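
For horizontal autoscaling, a widely used rule is proportional: scale the instance count by the ratio of observed to target utilization, then clamp it to configured bounds. The Kubernetes Horizontal Pod Autoscaler documentation describes the same ratio; the function below is only a sketch of it, and the parameter names and default bounds are assumptions.

```python
import math


def desired_replicas(current, current_util, target_util, min_r=1, max_r=10):
    """Proportional scaling: replicas grow with the observed/target ratio.

    Utilization values are percentages (for example 90 for 90%).
    """
    raw = math.ceil(current * current_util / target_util)
    return max(min_r, min(max_r, raw))   # clamp to the allowed range
```

With 4 instances running at 90% against a 60% target, the rule asks for 6 instances; at 20% with 2 instances it scales in to 1.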

4. Resource Provisioning

- Dynamic Provisioning: this method distributes resources as needed rather than allocating a fixed amount of resources beforehand. It ensures that resources are available when needed and are not wasted when demand is low.

- Resource Pooling: combines resources from multiple servers or edge devices to create a shared pool. By allowing for variable allocation based on current demand, this technique improves resource utilization across the network.
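
A shared pool with on-demand leases can be sketched in a few lines. This example assumes a single resource type (CPU cores) and a simple "most free capacity first" placement rule; the `ResourcePool` class and its methods are hypothetical.

```python
class ResourcePool:
    """Shared pool of CPU cores contributed by several edge nodes."""

    def __init__(self, capacity_by_node):
        self.free = dict(capacity_by_node)   # node -> cores not yet leased
        self.leases = {}                     # task -> (node, cores)

    def acquire(self, task, cores):
        """Lease cores from the node with the most free capacity, or return None."""
        node = max(self.free, key=self.free.get)
        if self.free[node] < cores:
            return None                      # no single node can satisfy the request
        self.free[node] -= cores
        self.leases[task] = (node, cores)
        return node

    def release(self, task):
        """Return a task's cores to the pool when it finishes."""
        node, cores = self.leases.pop(task)
        self.free[node] += cores
```

Nothing is reserved ahead of time: capacity is taken when a task arrives and returned when it ends, which is the essence of dynamic provisioning.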

5. Heuristic-Based Allocation

- Rule-Based Systems: allocate resources according to predefined rules or thresholds. For example, the system may automatically allocate more resources if CPU utilization exceeds 80%.

- Optimization Models: use mathematical models to determine how best to allocate resources in light of various constraints and goals. This method considers factors such as cost, performance, and capacity.
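
Both ideas can be shown at toy scale. The first function is the 80% CPU rule from the text; the second treats placement as a small optimization problem, trying every task-to-node mapping and keeping the cheapest one that fits. The function names, the latency table, and the exhaustive search are assumptions for illustration; real systems would use a solver or heuristic, because brute force grows exponentially with the number of tasks.

```python
from itertools import product


def rule_based_extra_cores(cpu_percent, threshold=80, step=1):
    """Rule: grant `step` extra cores when CPU utilization exceeds the threshold."""
    return step if cpu_percent > threshold else 0


def best_assignment(task_demand, node_capacity, latency):
    """Find the task -> node mapping with the lowest total latency
    that does not exceed any node's capacity (exhaustive search)."""
    tasks, nodes = list(task_demand), list(node_capacity)
    best, best_cost = None, float("inf")
    for choice in product(nodes, repeat=len(tasks)):
        used = {n: 0 for n in nodes}
        for t, n in zip(tasks, choice):
            used[n] += task_demand[t]
        if any(used[n] > node_capacity[n] for n in nodes):
            continue                          # violates a capacity constraint
        cost = sum(latency[t][n] for t, n in zip(tasks, choice))
        if cost < best_cost:
            best, best_cost = dict(zip(tasks, choice)), cost
    return best, best_cost
```

The rule reacts to one metric at a time, while the model weighs all tasks and constraints together, which is why it can find placements a simple threshold would miss.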

6. AI and Machine Learning

- Predictive Analytics: uses historical data and machine learning techniques to predict future resource requirements. By predicting patterns, the system can proactively adjust resources to meet anticipated demands.

- Adaptive Algorithms: employ AI-driven algorithms that learn from historical and real-time data to continuously improve allocation strategies. Over time, these algorithms become better at allocating resources effectively and adjusting to new trends.
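
Prediction does not have to start with a large model. As a deliberately simple stand-in for the machine learning techniques mentioned above, the sketch below fits a straight line to recent load samples by least squares and extrapolates one step ahead. The `forecast_next` name and the assumption of equally spaced samples are illustrative choices.

```python
def forecast_next(history):
    """Fit a straight line to equally spaced samples and extrapolate one step."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    mean_x = (n - 1) / 2                       # sample times are 0, 1, ..., n-1
    mean_y = sum(history) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(history))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept + slope * n               # predicted value at the next time step
```

If the forecast crosses a capacity threshold, the system can add resources before the load arrives instead of reacting afterwards.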

7. Container Orchestration

- Kubernetes: orchestrates containerized applications and automates resource assignment based on demand. Its features include resource quotas, load balancing, and autoscaling.

- Docker Swarm: provides native clustering for Docker containers and distributes container workloads across nodes for effective resource management.

8. Resource Scheduling

- Task Scheduling: arranges tasks and workloads based on resource availability and demand, so that tasks are carried out efficiently and resources are well utilized.

- Priority-Based Scheduling: assigns a priority level to tasks or applications so that critical tasks receive the resources they require, even during times of high demand.
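
Scheduling by importance maps naturally onto a priority queue. In this sketch a lower number means a more important task, and a counter preserves submission order among tasks of equal importance; the `PriorityScheduler` class and the task names in the usage note are hypothetical.

```python
import heapq
import itertools


class PriorityScheduler:
    """Run the most important pending tasks first."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()   # tie-breaker: FIFO within a level

    def submit(self, name, priority):
        """Queue a task; a lower priority number means more important."""
        heapq.heappush(self._heap, (priority, next(self._counter), name))

    def run_next(self, free_slots):
        """Start up to `free_slots` of the most important pending tasks."""
        started = []
        while self._heap and len(started) < free_slots:
            _, _, name = heapq.heappop(self._heap)
            started.append(name)
        return started
```

If `"log-upload"` (level 5) is submitted before `"brake-control"` (level 0) and only one slot is free, `run_next(1)` still starts `"brake-control"` first.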

When combined, these techniques allow systems to adjust to changing workloads, maintain performance, and avoid wasting resources in edge computing environments.

Challenges and Considerations

1. Scalability Issues:

- Complexity: Managing dynamic resource allocation becomes more difficult as the number of edge devices and applications rises. Building solutions that can handle large deployments without performance degradation is challenging.

- Coordination: Complex algorithms and communication protocols are required to distribute resources among a distributed network of edge devices.

2. Latency and Performance:

- Real-Time Adjustments: Changes to resource distribution must take effect quickly and must not add to existing latency. Delays in resource reallocation can hurt performance, particularly in latency-sensitive applications.

- Overhead: Continuously monitoring and adjusting resources adds overhead that can affect overall system performance, so lightweight implementations are required.

3. Resource Constraints:

- Limited Resources: Edge devices often have less processing and storage capacity than centralized cloud services. Effective dynamic allocation must work within these constraints to avoid resource exhaustion.

- Heterogeneity: Because different edge devices have varying resource capacities, allocation strategies may become more complicated, requiring adaptive approaches that consider a range of device profiles.

4. Security Concerns:

- Data Privacy: The frequent data exchanges involved in dynamic allocation can raise security and privacy issues. Protecting sensitive data and securing communication channels are essential.

- Attack Surface: The added complexity of dynamic resource management can create new vulnerabilities and enlarge the attack surface, which calls for strong security measures.

5. Integration Challenges:

- Legacy Systems: Integrating dynamic resource allocation with existing infrastructure and legacy systems can be difficult. Compatibility issues may arise, requiring solutions that work well within the current environment.

- Interoperability: Especially in heterogeneous settings, it can be challenging to ensure that dynamic allocation strategies work well across a variety of platforms, devices, and applications.

6. Cost Management:

- Operational Costs: While resource optimization through dynamic allocation may result in cost savings, implementation and administration can carry significant upfront and ongoing costs. It’s important to balance these costs against the benefits.

- Efficiency vs. Cost: Careful planning and resource management are needed so that dynamic allocation strategies remain dependable and effective without costing more than they save.

7. Algorithm Complexity:

- Decision-Making: Developing algorithms that accurately predict and respond to changing demands can be challenging. Overly simplistic models may not handle real-world situations well, while more sophisticated models may increase computation costs.

- Tuning: To achieve good performance and resource utilization, algorithms must be regularly adjusted and improved, which can itself require a significant amount of resources.

Addressing these challenges requires a combination of modern technology, strategic planning, and ongoing monitoring, so that edge computing environments can meet their needs while maintaining performance, security, and cost-efficiency.

Conclusion

Dynamic resource allocation is crucial for optimizing performance and efficiency in edge computing environments. By adjusting resource distribution in real time, it addresses fluctuating workloads, limited resources, and stringent latency requirements. For modern distributed computing systems, this flexibility is essential because it supports scalability and cost savings while improving system efficiency. Alongside these benefits, dynamic resource allocation also brings challenges, including scalability, latency, and security.

Sophisticated algorithms, robust security measures, and effective integration strategies are required to overcome these challenges. As edge computing advances, dynamic resource allocation will be crucial to preserving systems’ responsiveness, effectiveness, and capacity to meet a range of changing demands. In summary, dynamic resource allocation can significantly increase the performance and resilience of edge computing systems, paving the way for more flexible, cost-effective, and scalable solutions across a range of industries.